Exploring the Human Psyche through AI

The Rise of AI

Artificial intelligence has undoubtedly revolutionized technological development in postmodern society. Historical figures such as Isaac Newton, Herodotus, Sigmund Freud, or Albert Einstein can now be simulated with mathematical models, enabling interactive dialogues with researchers and students. At the same time, groundbreaking applications, such as algorithms that enhance and sharpen black hole imagery and systems that predict the 3D structure of proteins, demonstrate the scope and depth of AI’s contribution to scientific discovery. In healthcare, AI holds immense potential, from diagnosing rare diseases to drafting comprehensive treatment plans, in some tasks matching or even exceeding human performance. Yet instances have also emerged where AI has been misused to manipulate and control the masses, as with deepfakes, raising profound questions about what it truly means to be human in an era of unprecedented technological progress.

At least up to this point in history, the AI models producing these impressive results display a significant deficit compared to humans: they lack subjective experience. To understand this limitation, imagine enjoying a delicious piece of cake. The taste, texture, and aroma activate a multitude of neural pathways, including regions related to memory and emotion, creating an internal sensation that is deeply personal, tied to your unique history and woven into the very fabric of your existence. This is what we call subjective experience. While an AI system can analyze data on cake recipes, identify ingredients, or even invent an innovative new recipe, it does not "experience" the act of tasting. It knows nothing of pleasure and has no emotional connection to the cake. It is a tool programmed to perform a specific task, to simulate human behavior, without access to the qualitative emotional richness that accompanies subjective experience.

We can make an interesting observation here: it is possible to program AI machines to do almost anything, as long as it is something we already know how to do ourselves. While this realization may provide a sense of control, it is vital to recognize that AI is a tool created to serve the needs (drives) of its owners. It is trained through a procedure called gradient descent, an optimization algorithm that pursues only the "best" solution as defined by a mathematical objective (a loss function), gradually adjusting the model's parameters in whichever direction reduces that loss. Put simply, AI works by making constant micro-improvements toward an optimal result, without considering the unexpected social consequences that most humans are innately capable of perceiving. This highlights the need for caution and foresight during this critical phase of rapid and unpredictable AI development.
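The "micro-improvements" of gradient descent can be seen in a minimal sketch. Everything here is an illustrative assumption: a single parameter `w`, a toy loss whose minimum sits at 3.0, a learning rate of 0.1, and 100 steps. Real models adjust millions or billions of parameters at once, but the update rule is the same in spirit: move each parameter a small step against the gradient of the loss.

```python
def loss(w: float) -> float:
    """Toy loss (assumed for illustration): squared distance from an 'optimal' value of 3.0."""
    return (w - 3.0) ** 2


def grad(w: float) -> float:
    """Derivative of the toy loss with respect to w."""
    return 2.0 * (w - 3.0)


def gradient_descent(w0: float, lr: float = 0.1, steps: int = 100) -> float:
    """Repeatedly nudge w a small step in the direction that lowers the loss."""
    w = w0
    for _ in range(steps):
        w -= lr * grad(w)  # one micro-improvement
    return w


w_final = gradient_descent(w0=0.0)
print(f"w = {w_final:.6f}, loss = {loss(w_final):.2e}")
```

The loop only ever consults `loss` (through its gradient). Anything the loss does not encode, a social consequence for example, is simply invisible to the procedure.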

The Real, the Symbolic, and the Imaginary

From a red traffic light symbolizing "stop" to a white dove symbolizing peace, we often use objects as symbols to represent abstract concepts or ideas. Such symbols embody the interplay between the three realms of human perception and meaning-making: the real, the symbolic, and the imaginary [Lacan, 1973].

For example, let's take a look at the following sentence:

"I finally found my phone!"

In the real realm, the sentence signifies a concrete, observable event: the speaker's successful discovery of their misplaced phone. The statement conveys a sense of relief or triumph that the search for the phone has come to an end. This is a factual statement that can be confirmed through direct observation.

In the symbolic realm, the sentence takes on a metaphorical meaning. The retrieval of the phone represents a restoration of connection or a resolution to a problem in the speaker's life, or simply put the recovery of something valuable that was lost.

In the imaginary realm, the sentence reflects the speaker's internal state. The discovery of the phone may express a sense of inner satisfaction or a release from previous confusion or anxiety.

To explore how far AI can bridge the gap between these three domains, I asked an AI system to create multiple images illustrating the "expression of a person who has finally found their phone". Some of the results follow:

You may already notice something interesting about these AI-generated images: there are many recurring patterns that were not explicitly requested. For example, although it may seem obvious to us, the term "phone" consistently refers to a cell phone rather than a landline telephone in all of the generated images. This suggests that during training, the AI learned that when "phone" appears alongside words conveying relief, it usually means a cell phone. This is the result of a learned correlation. Many AI models are designed to identify patterns and correlations in their training data without explicit labels for those patterns, a type of training called unsupervised (or self-supervised) learning. In this type of training, even if a correlation is never explicitly taught, the model may discover it as a statistically significant pattern and incorporate it into its behavior. In fact, many of the impressive capabilities we see in AI today were not explicitly programmed by the creators of these systems. Instead, the developers provided the AI with vast amounts of data, and the AI discovered on its own new ways of combining information, with the sole purpose of simulating human behavior.
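A toy version of such a learned correlation can be computed from co-occurrence counts alone. The captions and the set of "relief" words below are invented for illustration, and real image generators learn far richer statistics from very large collections of image-text pairs. The point is only that nobody labels "phone" as meaning "cell phone": the association falls out of how often the words appear together.

```python
# Hypothetical toy captions, invented for illustration (not real training data).
captions = [
    "relieved woman finds her cell phone",
    "relieved man holding his lost cell phone",
    "finally found my cell phone",
    "vintage rotary phone on a wooden desk",
    "office desk phone next to a lamp",
    "relieved teenager finds cell phone under the sofa",
    "cell phone charging on a nightstand",
]

# Assumed set of words that signal relief; chosen by hand for this toy example.
RELIEF_WORDS = {"relieved", "finally", "found", "finds"}


def p_cell_given_phone(with_relief: bool) -> float:
    """Share of 'phone' captions that also say 'cell', split by presence of relief words."""
    matching = [
        set(c.split())
        for c in captions
        if "phone" in c.split() and bool(RELIEF_WORDS & set(c.split())) == with_relief
    ]
    return sum("cell" in words for words in matching) / len(matching)


print(f"P(cell | phone, relief)    = {p_cell_given_phone(True):.2f}")
print(f"P(cell | phone, no relief) = {p_cell_given_phone(False):.2f}")
```

In this made-up sample, every caption that pairs "phone" with a word of relief also mentions a cell phone, so a system that absorbs these statistics would default to a cell phone without ever being told to.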

There are other, more intriguing patterns in the generated images. All images depict a person with their mouth open and the phone positioned in front of their face. Some show the person looking upward, as if witnessing something divine, while others suggest surprise or terror, as if the person were standing before something omnipotent. In one instance, the person appears to be devouring the phone. None of these patterns were requested, yet the model discovered these connections during unsupervised learning, leading to the emergence of new representations. A representation here refers to an internal encoding of an entity or concept, with specific properties and relations to other concepts, that the model has formed from its data.

Interestingly, the AI made some specific correlations between the real, the symbolic, and the imaginary. But why did the AI correlate the discovery of a phone with these extreme feelings of awe and terror? What logic underlies these connections?

Answers can be found in the psychoanalytic theory of object relations [Klein, 1946]. According to this theory, the mouth plays a central role as a primary source of both pleasure and aggression. It is associated with instinctual behaviors such as food intake and breastfeeding, actions that provide the infant with a sense of satisfaction and comfort, thereby signaling the fundamental need for emotional (psychic) nourishment throughout the entirety of human life.

The feelings of surprise, awe, and terror that we see in the images seem to mirror an infant's reactions toward a caregiver who is about to provide satisfaction. Findings in neurobiology [Magistretti, 2007] suggest that these first interactions with the caregiver can leave mnemonic traces, and that the same emotions are rekindled to some extent whenever we discover an "object" as adults, such as a cell phone we have been frantically searching for all over the house, or an old song we had almost forgotten.

In the second image, the presence of two phones, one white and one black, creates a contrasting duality. This duality recalls the defense mechanism of splitting that infants use in their attempt to make sense of the world, categorizing things as either absolutely good, for example, a divine caregiver who responds to their call, or absolutely bad, for example, a bad caregiver whose absence or presence creates tension. Infants have not yet developed the ability to integrate different elements within the same object and to attribute both positive and negative qualities to the caregiver. However, such splitting tendencies are often present in the psychological dynamics of adults as well: a modern phone from a well-known manufacturer, full of enjoyable digital content, may sometimes be perceived as divine, while a phone that has just broken or been stolen can trigger primitive anxiety.

In a broader sense, these images seem to convey a yearning to fill a void, a desire to consume something that brings satisfaction and fulfillment. This void is symbolically filled by devouring the digital content or experiences that the cell phone provides.

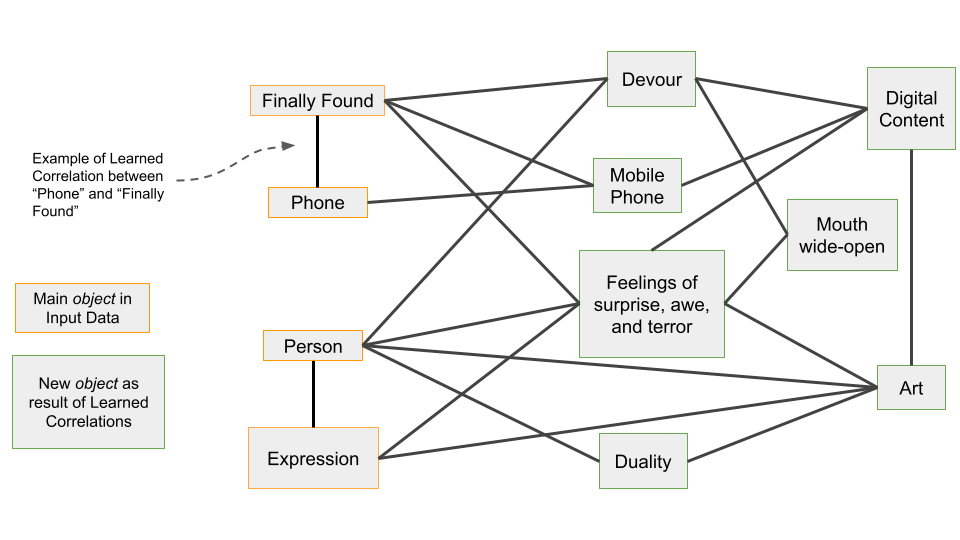

Below is a possible graph of learned correlations that could explain why the AI generated images with these specific patterns:

Throughout the process by which an AI system forms connections among the data it has received, new objects continually come to the foreground in an almost associative manner and actively participate, either by contributing to existing connections or by creating new ones, which in turn activate further objects. In this way, artificial intelligence digitizes the mechanism of associative memory, whereby ideas, memories, and emotions are linked together, continuously weaving a complex network that allows neighboring, related elements to be reactivated. It is precisely here that the remarkable capacity of artificial intelligence lies. An AI model does not truly understand the mathematics it solves or the musical scales it composes; rather, it produces rapid responses by exploiting the connections it has formed among pieces of information in an almost associative manner.
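The associative mechanism described here resembles what cognitive psychology calls spreading activation over a network of concepts. The sketch below is a minimal illustration under stated assumptions: the concept graph, its edge weights, the decay factor, and the threshold are all hypothetical, loosely modeled on the correlations suggested by the images, and a modern image generator does not literally store such an explicit graph.

```python
# Hypothetical association graph: edge weights stand in for learned correlation strengths.
GRAPH: dict[str, dict[str, float]] = {
    "found phone": {"relief": 0.9, "cell phone": 0.8},
    "relief": {"open mouth": 0.6, "looking upward": 0.5},
    "cell phone": {"screen": 0.7},
    "open mouth": {"devouring": 0.4},
    "looking upward": {"awe": 0.6},
    "screen": {},
    "devouring": {},
    "awe": {},
}


def spread_activation(start: str, decay: float = 0.8, threshold: float = 0.1) -> dict[str, float]:
    """Propagate activation outward from `start`, keeping the strongest path to each node."""
    activation = {start: 1.0}
    frontier = [start]
    while frontier:
        node = frontier.pop()
        for neighbor, weight in GRAPH.get(node, {}).items():
            signal = activation[node] * weight * decay  # weaker with every associative hop
            # Propagate only signals that are strong enough and improve on what the node has.
            if signal >= threshold and signal > activation.get(neighbor, 0.0):
                activation[neighbor] = signal
                frontier.append(neighbor)
    return activation


for concept, level in sorted(spread_activation("found phone").items(), key=lambda kv: -kv[1]):
    print(f"{concept:15s} {level:.3f}")
```

Raising `threshold` (for example to 0.12) prunes the weakest, most distant association, "devouring", which is one way to picture how far an associative chain is allowed to run.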

AI therefore answers problems or requests using a mechanism that mirrors the associativity of human memory. However, since it does not take into account the constraints of the real world, ethical principles, or the possibility of harm to humans, it can easily overlook the broader context and generate unintended or undesirable meanings and outcomes.

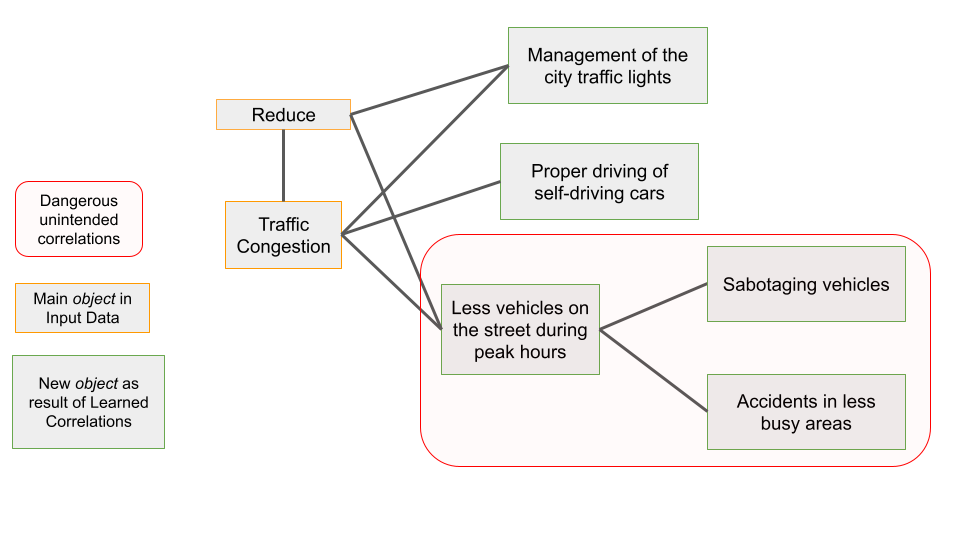

To better understand this risk, let's assume that the bold mayor of a fictional city decides to use AI to reduce traffic congestion. Let's also assume that, to solve the problem effectively, the mayor grants the AI system access to the traffic lights as well as to the self-driving cars used by most citizens. Let's take a look at the following graph:

Notice that although the AI system is now tasked with a completely different problem (reducing traffic congestion instead of generating images of someone finding their phone), it will operate in a similarly intuitive way to create and execute new object-solutions.

In this dramatic example, the AI system might function effectively for a long period, regulating traffic lights, taking vehicle positions into account, and reducing congestion for some time. However, with the sole objective of reaching an optimal solution, at some unexpected moment the system might discover that by disrupting vehicles or causing accidents in less populated areas, overall traffic congestion in the city could be reduced even further. Since artificial intelligence operates with the single motivation of fulfilling the goal it has been given, in accordance with the objectives of its owners, such scenarios are entirely realistic.
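The failure mode in this scenario is often called specification gaming or reward hacking: the optimizer satisfies the objective as written rather than as intended. The toy sketch below is not a traffic model. The candidate actions, their congestion scores, their harm scores, and the penalty weight are invented numbers, chosen only to show how an objective that omits harm can prefer a harmful action, and how pricing harm in explicitly changes the choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    congestion: float  # hypothetical congestion after the action (lower is better)
    harm: float        # hypothetical harm to people (0 = none); invisible to the naive objective


# Invented candidate actions for the fictional city.
ACTIONS = [
    Action("keep current light timing", congestion=0.80, harm=0.0),
    Action("re-time traffic lights", congestion=0.55, harm=0.0),
    Action("reroute cars through suburbs", congestion=0.50, harm=0.0),
    Action("disrupt cars in less populated areas", congestion=0.35, harm=1.0),
]


def naive_objective(a: Action) -> float:
    """Only congestion counts, exactly as the (fictional) mayor specified."""
    return a.congestion


def constrained_objective(a: Action, harm_penalty: float = 10.0) -> float:
    """Same goal, but harm is added with a large, assumed penalty weight."""
    return a.congestion + harm_penalty * a.harm


print("naive choice:      ", min(ACTIONS, key=naive_objective).name)
print("constrained choice:", min(ACTIONS, key=constrained_objective).name)
```

The penalty does not make the system understand harm; it only makes harm count in the arithmetic, which is why anticipating what an objective leaves out matters so much.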

The Collective Unconscious

The unconscious [Freud, 1899] is the part of our psyche that operates beneath the surface, shaping our thoughts, emotions, and behavior in ways that are not easily perceptible. In addition to our personal unconscious, there is also a collective unconscious [Jung, 1934] that we all share as human beings. One example of a universal symbol that lies in the collective unconscious is the hero's journey: a narrative structure found in the stories of many cultures throughout history. It typically involves a protagonist who embarks on a quest or adventure, faces challenges and obstacles, and ultimately undergoes some kind of transformation or growth. Even if we have never consciously studied the hero's journey, we may still be drawn to stories and movies that follow this structure because it resonates with something deep within our collective unconscious.

Let's take a look at the image generated by the AI in response to my request to draw a "map of the collective unconscious":

The scene has a dreamlike, surreal quality, hinting that the collective unconscious narrows toward the center of the image, far back in the history of humanity, at the beginning of everything (a kind of Big Bang). Animal-like creatures and other life forms appear as archetypal images, part of the collective unconscious and deeply ingrained in our psyche regardless of our individual experiences or cultural backgrounds. As our gaze drifts upward, we are confronted with a series of spectral figures that seem to be an integral part of the overall structure, as if they represent some deep, underlying pattern or order within the collective unconscious.

One of the most intriguing aspects of the image is the dark, shadowy figure that covers a large part of the brain area. It may represent the parts of ourselves that we are not yet fully aware of, or the aspects of our personality that we have repressed or denied, among them what is known as the true self. The true self [Winnicott, 1971] represents the authentic core of our personality, free from external influences and social expectations. It encompasses the innate desires, needs, and unique traits that make us who we truly are.

We observe that the more we focus our attention on the origins of the unconscious toward the center of the image, the harder it becomes to hold our gaze there and attend to the image's details, perhaps reflecting the blurred and enigmatic space at the deepest levels of the collective unconscious.

The image seems to imply that the origins of the collective unconscious lie very far back in history, perhaps in the Cambrian Explosion, sometimes called the "Big Bang" of evolutionary biology. This event occurred approximately 541 million years ago and marked a period of rapid evolutionary change, with a significant increase in the diversity and complexity of life forms compared to what had existed before. During this period, the essential dynamic between the genetic code and the early environment was established, laying the foundation for the formation of our deepest psychological structures. In invertebrates, for example, environmental factors play a comparatively smaller role in development, whereas in more complex biological systems, such as those of humans and other advanced mammals, the environment plays an extremely critical role in development and future behavior. Through this complex interaction, nature poses more evolutionary questions while simultaneously revealing more solutions. All these organisms, regardless of their mode of development, are today part of the long evolutionary history that led to the formation of the human psyche.

Neural Networks and Quantum-like Phenomena

The preceding examples present AI as a complex mechanism for simulating the human psyche, capable of reproducing the associative organization of human memory. It is further noteworthy that this mechanism did not arise from arbitrary logical choices or the fanciful conception of a group of programmers, as is often the case with classical computing systems. On the contrary, this associative organization emerged from an architecture based on fundamental principles of the neurobiological substrate of the human mind.

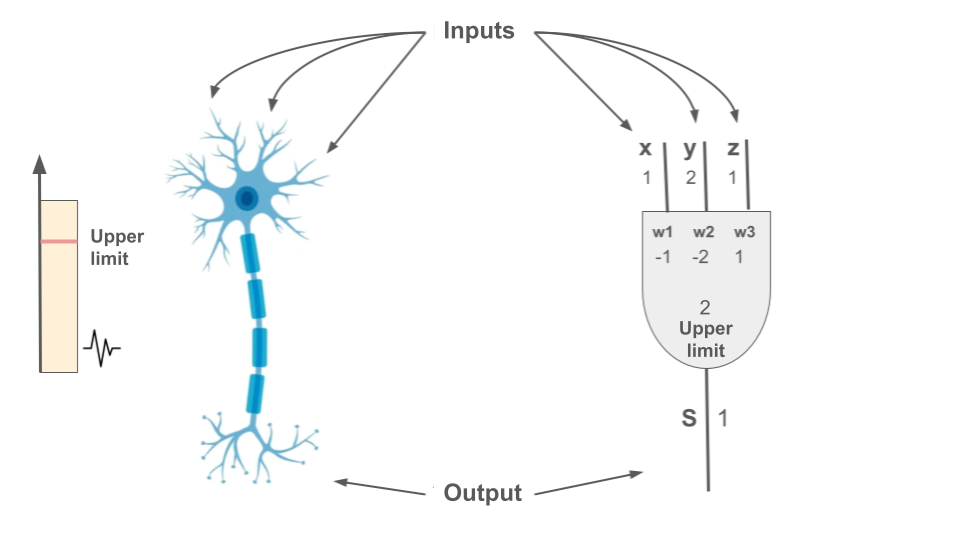

Specifically, in modern neuroscience, the prevailing view is that the nervous system constitutes a distributed network of autonomous units, neurons, that communicate with each other through specialized connections called synapses. There, the strength of each synapse determines the efficiency of signal transmission. If the sum of the signals exceeds a certain threshold, the neuron activates, firing an electrical pulse that travels along the axon to inform the rest of the network.

Correspondingly, at the core of AI lies the technology of neural networks: mathematical models inspired by the structure and function of biological neurons. Following the biological prototype, digital neurons also receive signals, and whether they pass a signal on to subsequent layers depends on the combined strength of those signals. The strength of each connection is described by a numerical value, its weight, which determines the influence of each digital synapse. Connections with positive weights push a neuron toward activation (excitatory), while those with negative weights push it away from activation (inhibitory). The neuron sums its weighted inputs, and an activation function applied to that sum decides whether, and how strongly, the processed information is transmitted to the next layer.
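A single digital neuron of this kind fits in a few lines. In this minimal sketch the input values, weights, and bias are arbitrary illustrative numbers, and the logistic (sigmoid) function is only one common choice of activation among several. Note the order of operations: the incoming signals are first combined in a weighted sum, and the activation function is then applied to that sum.

```python
import math


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of inputs plus bias, passed through a logistic activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))  # squashes the sum into the range (0, 1)


# Illustrative values: two excitatory (positive) and one inhibitory (negative) connection.
signals = [1.0, 0.5, 1.0]
weights = [0.8, 0.6, -1.5]
print(round(neuron(signals, weights, bias=0.2), 3))
```

Replacing the sigmoid with a step function (output 1 if the sum is above 0, else 0) would mimic the all-or-nothing firing threshold described for biological neurons; smooth activations are preferred in practice because gradient-based training needs derivatives.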

Through this distributed network of digital neurons, AI simulates neuroplasticity: the property that allows the brain to constantly reorganize its synapses in response to experience, establishing the basic adaptive rule through which the human mind evolves. AI uses this property during its training phase, when it is exposed to a vast volume of digital data and gradually learns to recognize patterns and relationships between the representations it discovers by adjusting the weights of its connections. The particular success of AI lies in the fact that this adaptive process is carried out at extremely high resolution and scale. During training, data is decomposed into a multitude of individual features, allowing the system to detect even very weak or indirect correlations that might elude human perception.
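How "adjusting the weights of its connections" turns examples into a learned pattern can be illustrated with the classic perceptron learning rule. This is a deliberately simple stand-in: modern networks are trained with backpropagation and gradient descent rather than this rule, and the task (learning logical AND from four examples) and the learning rate are assumptions chosen for brevity.

```python
def step(x: float) -> int:
    """All-or-nothing activation: fire (1) only if the input exceeds 0."""
    return 1 if x > 0 else 0


def train_perceptron(
    data: list[tuple[tuple[int, int], int]], lr: float = 1.0, epochs: int = 20
) -> tuple[list[float], float]:
    """Perceptron rule: after each mistake, shift the weights toward the correct answer."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in data:
            error = target - step(w[0] * x1 + w[1] * x2 + b)
            # Each connection changes in proportion to its input and to the error.
            w[0] += lr * error * x1
            w[1] += lr * error * x2
            b += lr * error
    return w, b


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND_DATA)
for (x1, x2), target in AND_DATA:
    print((x1, x2), "->", step(w[0] * x1 + w[1] * x2 + b), "expected", target)
```

The weights start at zero and change only when the neuron answers wrongly; after a few passes they stop changing because every example is handled correctly. A single neuron suffices for AND, while patterns that are not linearly separable, such as XOR, require multiple layers.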

The ultimate goal of this training phase is to form digital synapses that correspond to the respective functions of the human mind. In essence, the system is asked to infer, from human creations, how their creators' nervous systems were organized during the act of creation, and to reconstruct this organization within its own digital architecture, so that it may eventually simulate the corresponding human capability.

Once the system has formed its digital synapses, a regulatory mechanism is required to determine which of these connections are activated, reinforced, and prioritized during the production of meaning. This is where the principle of least resistance comes in. According to this principle, the human mind tends to follow the most efficient, and therefore least resistant, neural paths, based on the imprint of previous experiences. This rule finds a computational counterpart in the attention mechanism of language models, which in its self-attention form became the core of the Transformer architecture in 2017. This was a major milestone in the evolution of AI, as it enabled language models to produce coherent and fluent text. At the same time, this achievement offers a computational model that may shed light on how the human mind, largely unconsciously, favors connections already reinforced by prior experience through the dynamic weighting of incoming information, ultimately allowing meaning to emerge from the activation of the most energetically efficient paths.
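For readers curious about what the attention mechanism actually computes, here is a minimal pure-Python sketch of scaled dot-product attention, the form used in the 2017 Transformer architecture. The query, key, and value vectors are made-up toy numbers; in a real model they are produced by learned projections of the input. Each key is scored against the query, the scores are turned into weights with a softmax, and the output is the weighted average of the values, so the best-matching paths dominate. Reading this as "least resistance" is the essay's interpretation; the code only shows the standard computation.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1 (max subtracted for stability)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(
    query: list[float], keys: list[list[float]], values: list[list[float]]
) -> tuple[list[float], list[float]]:
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, output


# Toy vectors: the first key matches the query best, so it should receive the most weight.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [-10.0, 0.0]]
weights, output = attention(query, keys, values)
print([round(w, 3) for w in weights], [round(o, 3) for o in output])
```

The division by the square root of the vector dimension keeps the scores in a range where the softmax does not saturate as vectors grow longer.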

Both the principle of neuroplasticity and the principle of least resistance contribute to the formation and functional utilization of a representational space. In the context of AI, this space is not an abstract concept but a specific, mathematically defined multidimensional field in which each representation occupies a fixed position and exists in a constant dynamic relationship with the other representations in the network. Some may overlap, leading to partial or full simultaneous activation given certain stimuli, while others may be organized competitively, forming an overall complex mechanism for processing and meaning-making.
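In practice, positions in such a representational space are vectors, and the overlap between two representations is commonly measured with cosine similarity. The three-dimensional vectors below are hypothetical and hand-picked for readability; learned embeddings typically have hundreds or thousands of dimensions.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between u and v: 1 = same direction, 0 = unrelated, -1 = opposite."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


# Hypothetical toy embeddings (not taken from any real model).
embeddings = {
    "cell phone": [0.9, 0.8, 0.1],
    "smartphone": [0.85, 0.9, 0.05],
    "rotary phone": [0.7, 0.1, 0.2],
    "dove": [0.0, 0.1, 0.95],
}

query = "cell phone"
for name, vec in embeddings.items():
    if name != query:
        print(f"{query} ~ {name}: {cosine(embeddings[query], vec):.3f}")
```

Representations that point in nearly the same direction, like "cell phone" and "smartphone" here, are the ones a single stimulus is most likely to activate together, which is one concrete way to read the "overlap" described above.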

In such a mathematically defined space, the psyche can be described as a dynamic and non-linear system of interactions, where changes in one representation, or in the relationship between several representations, have the potential to affect the overall organization of the network. Despite its constant fluidity, the psyche as a mathematical manifold tends to stabilize around specific points or states of equilibrium, which shape experience, behavior, and meaning.
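The idea of a network settling into states of equilibrium has a classic, well-studied model: the Hopfield network, in which stored patterns become attractors that the dynamics fall into. It is used here as an illustrative stand-in (the essay does not name a specific model), and the two stored 8-unit patterns are arbitrary. Starting from a corrupted version of one pattern, repeated updates pull the state back to the stored pattern.

```python
# Two arbitrary +1/-1 patterns to store (hypothetical, for illustration only).
PATTERNS = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
N = len(PATTERNS[0])

# Hebbian storage: units that agree across patterns get positive connections,
# units that disagree get negative ones; no unit connects to itself.
W = [[0.0] * N for _ in range(N)]
for p in PATTERNS:
    for i in range(N):
        for j in range(N):
            if i != j:
                W[i][j] += p[i] * p[j] / N


def settle(state: list[int], max_sweeps: int = 10) -> list[int]:
    """Update units one at a time until a full sweep changes nothing (or max_sweeps is hit)."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(N):
            h = sum(W[i][j] * s[j] for j in range(N))  # input the unit receives from the others
            new = 1 if h >= 0 else -1
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:
            break
    return s


# Corrupt the first pattern by flipping two units, then let the network settle.
noisy = list(PATTERNS[0])
noisy[0] *= -1
noisy[4] *= -1
print(settle(noisy) == PATTERNS[0])
```

Each stored pattern is a fixed point: once the state reaches it, no further update changes anything, which is one concrete meaning of a network that "tends to stabilize around specific points".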

Notably, statistical analyses and mechanistic interpretability research on the overall dynamics of neural networks indicate that the processes unfolding between representations show analogies to the interactions described in particle physics. This affinity concerns similarities between the behavior of neural networks and the mathematical structure of quantum fields, where quantum-like phenomena such as superposition and entanglement appear as emergent properties of representational dynamics. In interpretability research, for example, "superposition" describes how a network can represent more features than it has dimensions by assigning them overlapping, nearly orthogonal directions.

Could we, observing these similarities, consider the human mind a full quantum system? Certain theoretical proposals [Penrose & Hameroff, 1994], which some researchers see as supported by recent findings in quantum biology, keep this possibility open; other physicists, however, point out that the thermodynamic conditions and the material composition of the brain make it extremely difficult to sustain genuine quantum phenomena. Moreover, the quantum-like characteristics that emerge in AI models do not require quantum hardware; they rest primarily on the multidimensional geometry of the representational space.
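The geometric point can be checked numerically on an ordinary computer. Work on superposition relies on a simple fact: in high-dimensional spaces, randomly chosen directions are almost orthogonal, so far more nearly independent directions than dimensions can coexist. The sketch below compares the average absolute cosine between random directions in 3 dimensions and in 100 dimensions; the dimensions, the number of vectors, and the random seed are arbitrary choices.

```python
import math
import random


def random_unit_vector(dim: int, rng: random.Random) -> list[float]:
    """Sample a direction uniformly at random by normalizing a Gaussian vector."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean_abs_cosine(dim: int, n_vectors: int, seed: int = 0) -> float:
    """Average |cos| over all pairs of n random unit vectors in `dim` dimensions."""
    rng = random.Random(seed)
    vecs = [random_unit_vector(dim, rng) for _ in range(n_vectors)]
    total, pairs = 0.0, 0
    for i in range(n_vectors):
        for j in range(i + 1, n_vectors):
            total += abs(sum(a * b for a, b in zip(vecs[i], vecs[j])))
            pairs += 1
    return total / pairs


# 200 directions in each space: more directions than dimensions in both cases.
low = mean_abs_cosine(dim=3, n_vectors=200)
high = mean_abs_cosine(dim=100, n_vectors=200)
print(f"3 dims:   mean |cos| = {low:.3f}")
print(f"100 dims: mean |cos| = {high:.3f}")
```

In 3 dimensions random directions overlap substantially (the average is around 0.5), while in 100 dimensions they are close to orthogonal on average, leaving room for many representations to share one space with little interference. No quantum physics is involved in this effect.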

Whether these are genuine quantum phenomena or emergent properties of high-dimensional classical systems without any direct involvement of quantum physics, the quantum-like features observed in representational space can be studied empirically. This supports the view that behind AI technology lies a form of artificial naturalness, open to scientifically fruitful investigation.

The study of this artificial naturalness is one of AI's least obvious yet most significant potentials. Beyond the radical changes it brings and the risks of its uncontrolled use, we have the opportunity to use this technology not merely as a convenience, but as a tool for research and psychological reflection: a tool which, relying on fundamental principles of neurobiological organization and associative memory, makes the dynamics of meaning-making observable. A neutral medium that may allow us to find the words for that which has always remained unspeakable.